Digital Character AI

Introduction

The interest in conversational digital characters has grown significantly in recent years, spanning industries such as healthcare, education, and entertainment. Thanks to progress in natural language processing and machine learning, these characters can participate in meaningful conversations with users, offering a more engaging and immersive experience. Our research aims to develop data-driven methods for establishing affect-aware conversational agents, speech synthesis, and animations to make interactions with digital characters more natural, engaging, and rewarding. Affect awareness is at the core of all projects. By relying on affective states, interactions with digital characters can be tailored to the emotions of the user.

Topics

Digital Einstein Platform

The Digital Einstein platform merges a conversational digital character with a physical environment. Users converse interactively with a digital, stylized representation of Albert Einstein, whose dynamic expressions and body language make the experience more engaging and immersive. Styled to echo the early 20th century, the platform includes an armchair, a carpet, a table, and various decorative items such as a lamp, books, a Mozart bust, and a pocket watch. It also incorporates a screen framed in wood, several speakers, and a media box, all discreetly placed behind the screen. Users talk to Digital Einstein through a microphone hidden in the top book on the table, while a camera concealed in the top of the frame captures their movements and reactions. The arrangement of speakers (two in the chair, one below the table, and two behind the screen) creates a spatial audio experience. Speech input is converted to text using Microsoft Azure's speech recognition technology.

Digital Einstein 1.0

The software uses machine learning to understand the user's intent and employs a dialog algorithm to generate the most appropriate response. This system is enhanced with motion-captured animations for facial expressions and body movements, along with pre-recorded speech mimicking Einstein's distinctive German accent. The responses stem from a dialog tree, which spans topics such as Einstein's famous theories, his academic pursuits, and his personal relationships. The algorithm introduces a degree of randomness to keep conversations dynamic and unpredictable. Einstein also reacts to the user's behavior: if he notices a lack of engagement, he will quickly ask whether the user is distracted or uninterested, and if he doesn't understand something, he will ask, with both courtesy and humor, for the question to be rephrased.
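The behavior described above can be illustrated with a small sketch. It assumes the intent has already been recognized and that each tree node holds several interchangeable responses; the class `DialogNode`, the function `respond`, and all example lines are hypothetical and not taken from the actual system, which plays pre-recorded speech.

```python
import random
from dataclasses import dataclass, field


@dataclass
class DialogNode:
    """One topic in the dialog tree with several interchangeable responses."""
    topic: str
    responses: list
    children: dict = field(default_factory=dict)


# Hypothetical fallback lines, used only for this illustration.
REPHRASE_PROMPTS = [
    "Pardon me, could you rephrase that?",
    "I did not quite follow. Could you ask again?",
]
ENGAGEMENT_PROMPTS = [
    "Am I boring you?",
    "You seem distracted. Shall we change the subject?",
]


def respond(node, intent, engaged, rng):
    """Return (next_node, response) for a recognized intent.

    The random choice among candidate lines is what keeps repeated
    visits to the same topic from sounding identical.
    """
    if not engaged:
        # Lack of engagement: stay on the current node and check in.
        return node, rng.choice(ENGAGEMENT_PROMPTS)
    child = node.children.get(intent)
    if child is None:
        # Intent not covered by the tree: ask the user to rephrase.
        return node, rng.choice(REPHRASE_PROMPTS)
    return child, rng.choice(child.responses)


root = DialogNode(
    topic="root",
    responses=["Good day! What would you like to talk about?"],
    children={
        "relativity": DialogNode(
            topic="relativity",
            responses=["Time is relative, you know.", "Ah, my favorite subject."],
        ),
    },
)

if __name__ == "__main__":
    node, line = respond(root, "relativity", engaged=True, rng=random.Random())
    print(node.topic, "->", line)
```

Passing the random number generator in explicitly makes the randomness reproducible in tests while leaving it unseeded in normal use.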

Digital Einstein 2.0

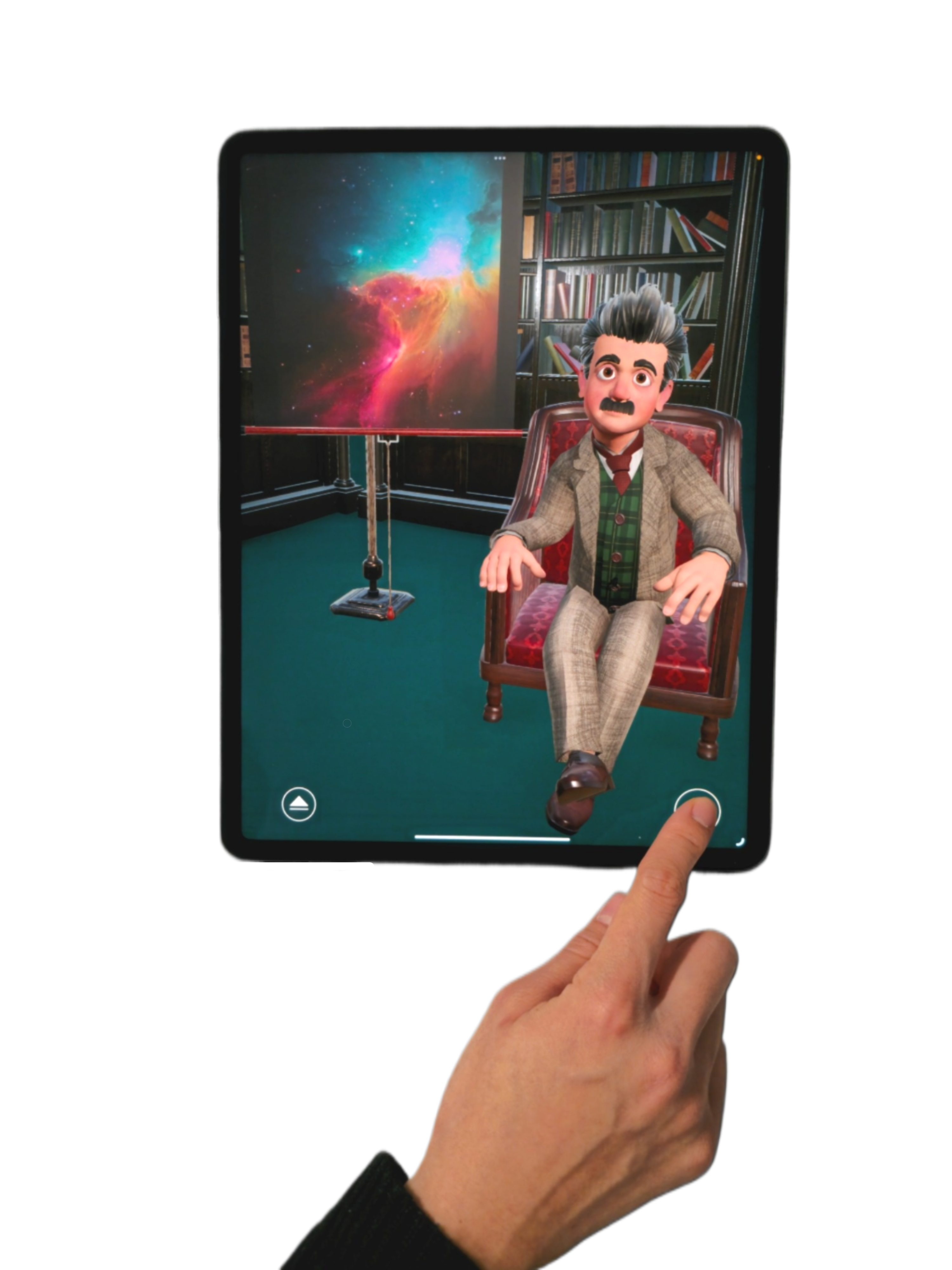

To make conversations more open and engaging, users can choose between a chatbot based on GPT-4o and another based on Llama-3 8B. We guide these chatbots toward the topics in the dialog tree by using prompt engineering for the GPT-4o chatbot and by fine-tuning the Llama-3 chatbot on synthetic Einstein conversations created using GPT-4. User characteristics (age, gender, appearance, re-identification) and behavior (attention) are analyzed from webcam data and used by the chatbot during the conversation. The responses are rendered as speech by a neural model from Microsoft Azure that was fine-tuned on over 2,000 recordings of an artist emulating Einstein's voice and can express eight different emotional tones, such as anger, excitement, or sadness. The facial animations are generated by a data-driven model. Corresponding body animations blend motion-captured movements based on the avatar's status (idle, listening, or speaking). Additionally, to visually represent the topic of the conversation, we continually generate and display an image, using GPT-4o to formulate a prompt and Midjourney to produce the image. The latest version of Digital Einstein is now also accessible on iPads as a mobile app and on the web at http://digital-einstein.ethz.ch.
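As a rough illustration of how webcam-derived user information could enter a prompt-engineered chatbot, the sketch below assembles a system prompt from a topic list and a few user attributes. The `UserContext` fields, the function `build_system_prompt`, and the prompt wording are assumptions made for this example; the actual prompts used by the platform are not described here.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class UserContext:
    """Attributes estimated from the webcam (field names are illustrative)."""
    age_group: Optional[str] = None
    returning_visitor: bool = False
    attentive: bool = True


def build_system_prompt(topics: List[str], user: UserContext) -> str:
    """Compose a system prompt that steers the chatbot and adds user context."""
    lines = [
        "You are Albert Einstein. Stay in character and keep answers short.",
        "Prefer these topics: " + ", ".join(topics) + ".",
    ]
    if user.age_group:
        lines.append(f"The user appears to be in this age group: {user.age_group}.")
    if user.returning_visitor:
        lines.append("The user has talked to you before; greet them accordingly.")
    if not user.attentive:
        lines.append("The user seems distracted; ask a short question to re-engage them.")
    return "\n".join(lines)


if __name__ == "__main__":
    context = UserContext(age_group="child", returning_visitor=True, attentive=False)
    print(build_system_prompt(["relativity", "academic life"], context))
```

The resulting string would be sent as the system message of whichever chat model is in use; only attributes that were actually detected add a line, so missing estimates do not leak placeholder text into the prompt.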

Digital Einstein Events

Digital Einstein was showcased at a series of prestigious national and international events. These events provided a platform to engage with diverse audiences, ranging from industry leaders and technology enthusiasts to academics and policymakers. Here are the key appearances of Digital Einstein:

- 27.10.22: Swisscom Business Day, Lucerne

- 17.03.23: StageOne, TEDx, Zurich

- 05.06.23: Roche Digital Tag, Rotkreuz

- 15.01.24 - 19.01.24: OpenForum @ World Economic Forum Annual Meeting 2024, Davos

- 21.06.24: Nacht der Wissensstadt, Heilbronn

- 14.10.24 - 18.10.24: GITEX Global, Dubai

- 11.11.24 - 12.11.24: Swiss Re, Resilience Summit 2024, Rüschlikon

- 15.11.24: Digitaltag, Vaduz

- 03.12.24 - 06.12.24: SIGGRAPH Asia, Tokyo

- 03.04.25: Polymesse, ETH Zurich

- 25.05.25 - 27.05.25: TECH Conference, Heilbronn

- 02.06.25: Microsoft Initiative to Advance AI Diffusion in Switzerland, Historical Museum Berne

- 20.09.25: Zunftbott, ETH Zurich

- 13.10.25 - 17.10.25: GITEX Global, Dubai

- 01.11.25 - 02.11.25: Berlin Science Week @ CAMPUS

- 08.11.25: Berlin Science Week @ Musikbrauerei

An ETH Ambassadors blog post highlights Digital Einstein's journey to Dubai and Tokyo, providing further insights into these appearances. A second ETH Ambassadors blog post documents the project's appearances at major venues in 2025 and takes a closer look at the system's evolution through hybrid LLM integration and real-time performance optimizations.

Affective Computing

Gaining awareness of affective states enables leveraging emotional information as additional context to design emotionally sentient systems.

Applications of such systems are manifold. For example, the learning gain can be increased in educational settings by incorporating targeted interventions that are capable of adjusting to affective states of students.

Another application consists of enabling digital characters and smartphones to support enriched interactions that are sensitive to the user's contexts.

In our projects, we focus on data-driven models relying on lightweight data collection tailored to mobile settings.

We have developed systems for affective state prediction based on camera recordings (i.e., action units, eye gaze, eye blinks, and head movement), low-cost mobile biosensors (i.e., skin conductance, heart rate, and skin temperature), handwriting data, and smartphone touch and sensor data.

We have also explored the complexities of human-chatbot emotion recognition in real-world contexts.

By collecting multimodal data from 99 participants interacting with a GPT-3-based chatbot over three weeks, we identified a significant domain gap between human-human and human-chatbot interactions.

This gap arises from subjective emotion labels, reduced facial expressivity, and the subtlety of emotions.

Using transformer-based multimodal emotion recognition networks, we found that personalizing models to individual users improved performance by up to 38% for user emotions and 41% for perceived chatbot emotions.

Personality Traits

Understanding and integrating personality traits into conversational digital characters is essential for enhancing user interaction and engagement.

Large language models (LLMs) like GPT-4 exhibit distinct personality structures during human interactions.

In our studies, we collected 147 chatbot personality descriptors from 86 participants and further validated them with 425 new participants. Our exploratory factor analysis revealed that while there is overlap, human personality models do not fully transfer to chatbot personalities.

The perceived personalities of chatbots differ significantly from those of virtual personal assistants, which are typically viewed in terms of serviceability and functionality.

This finding underscores the evolving nature of language models and their impact on user perceptions of agent personalities.

To enhance chatbot interactions, we introduced dynamic personality infusion, a method that adjusts a chatbot's responses using a dedicated personality model and GPT-4, without altering its semantic capabilities.
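One way to picture personality infusion is as a rewriting step applied to an already generated reply. The sketch below is a minimal illustration under that assumption: trait names, the 0 to 1 score scale, the prompt wording, and the functions `build_infusion_prompt` and `infuse_personality` are hypothetical, and the language model is passed in as a plain callable rather than a specific API.

```python
from typing import Callable, Dict


def build_infusion_prompt(response: str, traits: Dict[str, float]) -> str:
    """Ask a rewriting model to restyle `response` without changing its content."""
    trait_text = ", ".join(
        f"{name}: {score:.1f}" for name, score in sorted(traits.items())
    )
    return (
        "Rewrite the reply below so that it expresses this personality profile "
        f"(scores from 0 to 1): {trait_text}.\n"
        "Keep every fact and the meaning unchanged; only change tone and wording.\n\n"
        f"Reply: {response}"
    )


def infuse_personality(
    response: str,
    traits: Dict[str, float],
    rewrite: Callable[[str], str],
) -> str:
    """Apply the personality profile to a reply produced by any base chatbot.

    `rewrite` stands in for a call to a language model; it receives the
    prompt and returns the restyled reply.
    """
    return rewrite(build_infusion_prompt(response, traits))


if __name__ == "__main__":
    # Stub model that just echoes the prompt, so the sketch runs offline.
    restyled = infuse_personality(
        "The museum opens at 9 am.",
        {"friendly": 0.9, "playful": 0.4},
        rewrite=lambda prompt: prompt,
    )
    print(restyled)
```

Keeping the base reply and the restyling step separate is what allows the personality to change between turns while the underlying chatbot stays untouched.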

Through human-chatbot conversations collected from 33 participants and subsequent ratings by 725 participants, we analyzed the impact of personality infusion on perceived trustworthiness and suitability for real-world use cases.

Our work highlights the potential of dynamic, personalized chatbots in transforming user interactions, enhancing satisfaction, and building trust, thereby paving the way for more engaging and applicable real-world chatbot experiences.

Dialog Act Classification

A dialog act is a label that describes the semantic and structural function of an utterance in a conversation, e.g., inform, question, commissive, or directive. It is also commonly interpreted as the speaker's intent at a lower level. Determining dialog acts in interactions with digital characters is essential for enabling agents to understand and respond effectively to user intents, leading to more engaging and seamless interactions. We have developed a system for classifying dialog acts in conversations from multimodal data (text, audio, and video), which can be leveraged for online applications. Our research further focuses on leveraging intent for character response generation as well as for speech and animation synthesis.
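To make the idea of combining modalities concrete, the following sketch fuses per-modality class probabilities with a weighted average (late fusion). This is only one possible fusion strategy and is an assumption of this example, not a description of the developed system; the weights and probabilities are made up, and the per-modality classifiers are assumed to exist elsewhere.

```python
DIALOG_ACTS = ("inform", "question", "commissive", "directive")


def fuse(per_modality, weights):
    """Weighted average of per-modality probability distributions.

    Weights are renormalized over the modalities that are present, so a
    missing audio or video stream does not break the prediction.
    """
    total = sum(weights[m] for m in per_modality)
    fused = {act: 0.0 for act in DIALOG_ACTS}
    for modality, probs in per_modality.items():
        for act in DIALOG_ACTS:
            fused[act] += weights[modality] * probs.get(act, 0.0) / total
    return fused


def classify(per_modality, weights):
    """Return the dialog act with the highest fused probability."""
    fused = fuse(per_modality, weights)
    return max(fused, key=fused.get)


# Illustrative numbers only.
WEIGHTS = {"text": 0.6, "audio": 0.3, "video": 0.1}
EXAMPLE = {
    "text": {"inform": 0.2, "question": 0.7, "commissive": 0.05, "directive": 0.05},
    "audio": {"inform": 0.3, "question": 0.5, "commissive": 0.1, "directive": 0.1},
    "video": {"inform": 0.4, "question": 0.3, "commissive": 0.15, "directive": 0.15},
}

if __name__ == "__main__":
    print(classify(EXAMPLE, WEIGHTS))
```

Late fusion keeps each modality's classifier independent, which suits online use because a prediction can still be produced when one input stream is delayed or unavailable.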

Animation

Data-Driven Facial Animation Synthesis

When replacing fixed dialog systems with LLMs, pre-recorded MoCap does not suffice for animating conversational characters. Instead, the character's lip-sync, facial expressions, eye gaze, gestures, etc. need to flexibly adapt to new speech contents. Hence, we are performing research in the field of animation synthesis, developing deep sequence models for synthesizing animation control parameters on the fly based on the current conversational context.

Emotion-Content Disentanglement of Facial Animations

Equipping stylized conversational characters with facial animations tailored to specific emotions and artistic preferences enhances the coherence and authenticity of the characters. Within this project, we developed a novel Transformer-Autoencoder for disentangling emotion and content (the lip-sync needed in speech animation) in facial animation sequences. Our method captures the full dynamics of an emotional episode, including temporal changes in intensity and subject-specific differences in the emotive expression. Hence, our method provides a valuable tool for artists to easily manipulate the type and intensity of an emotion while maintaining its dynamics.

Augmented Reality

For an immersive augmented reality experience, it's essential that digital characters operate autonomously and interact dynamically with users. Our project focuses on crafting autonomous agents that can understand their environment and engage users in meaningful, goal-oriented interactions. By integrating LLMs and augmented reality (AR) technologies, users can interact directly with a digital Albert Einstein, gaining interactive and educational insights from his scientific legacy in a contemporary, user-friendly format. This endeavor not only honors Einstein's contributions to science but also repurposes his teachings for the modern digital age.

Autonomous Agents

Our research aims to redefine behavioral standards for autonomous agents, allowing them to act autonomously in both casual social interactions and complex tasks. By integrating LLMs with cognitive theories, we demonstrate how agents can mimic realistic behaviors while aligning with human values and expectations. The profiling methods developed in this study will help with character design, enhancing applications in creative writing and film production. Furthermore, the same cognitive framework will be used to develop the agents' theory of mind, improving their understanding of users and thus tailoring the interactive experience to individual needs. This approach will enable creators to develop more nuanced and credible characters, leveraging the frameworks established through this research.

Applications of Digital Characters

We are actively working on applying digital characters in diverse fields, offering innovative solutions for personalized and interactive experiences. In education, virtual teachers provide customized tutoring and support, adapting to students' learning styles and emotional states to enhance educational outcomes. In healthcare, virtual doctors assist in diagnosing conditions, offering medical advice, and monitoring patient progress, making healthcare more accessible and efficient. Virtual psychotherapists provide mental health support through therapeutic conversations and emotional assistance, making mental health care more approachable and scalable. These applications demonstrate the potential of digital characters to revolutionize traditional practices, delivering personalized and effective interactions across various domains.

Funding

The research is supported by ETH Zurich Research Grants and the Swiss National Science Foundation.

Publications

2025

PhonemeNet: A Transformer Pipeline for Text-Driven Facial Animation

The 18th ACM SIGGRAPH Conference on Motion, Interaction, and Games (Zurich, Switzerland, December 03-05, 2025), pp. 1-11 (Best Paper Honorable Mention)

Available files: [PDF] [Video] [BibTeX] [Abstract]

egoEMOTION: Egocentric Vision and Physiological Signals for Emotion and Personality Recognition in Real-World Tasks

Proceedings of NeurIPS (San Diego, USA, December 2-7, 2025), pp. 1-30

Available files: [PDF] [BibTeX] [Abstract]

Steering Narrative Agents through a Dynamic Cognitive Framework for Guided Emergent Storytelling

Proceedings of the AAAI Conference on Artificial Intelligence and Interactive Digital Entertainment (Edmonton, Canada, Nov 10-14, 2025), pp. 1-11

Available files: [PDF] [BibTeX] [Abstract]

A Joint Personality-Emotion Framework for Personality-Consistent Conversational Agents

Proceedings of the 25th International Conference on Intelligent Virtual Agents (IVA) (Berlin, Germany, September 16-19, 2025), pp. 1-9

Available files: [PDF] [BibTeX] [Abstract]

BEE: Belief-Value-Aligned, Explainable, and Extensible Cognitive Framework for Conversational Agents

Proceedings of the 25th International Conference on Intelligent Virtual Agents (IVA) (Berlin, Germany, September 16-19, 2025), pp. 1-9 (Best Paper Honorable Mention)

Available files: [PDF] [BibTeX] [Abstract]

A Platform for Interactive AI Character Experiences

Proceedings of SIGGRAPH Conference Papers '25 (Vancouver, Canada, August 10-14, 2025), pp. 15:1-15:11

Available files: [PDF][PDF suppl.] [Video] [BibTeX] [Abstract] [YouTube]

2024

Immersive Conversations with Digital Einstein: Linking a Physical System and AI

SIGGRAPH Asia 2024 Emerging Technologies (Tokyo, Japan, December 03-06, 2024), pp. 1-2

Available files: [PDF] [BibTeX] [Abstract]

EmoSpaceTime: Decoupling Emotion and Content through Contrastive Learning for Expressive 3D Speech Animation

The 17th ACM SIGGRAPH Conference on Motion, Interaction, and Games (Arlington VA, USA, November 21-23, 2024), pp. 1-12

Available files: [PDF] [Video] [BibTeX] [Abstract]

On Multimodal Emotion Recognition for Human-Chatbot Interaction in the Wild

Proceedings of the 26th International Conference on Multimodal Interaction (ICMI) (San José, Costa Rica, November 04-08, 2024), pp. 12-21

Available files: [PDF] [BibTeX] [Abstract]

Multimodal Dialog Act Classification for Conversations With Digital Characters

Proceedings of the 6th International Conference on Conversational User Interfaces (CUI) (Luxembourg, Luxembourg, July 08-10, 2024), pp. 1-14

Available files: [PDF] [BibTeX] [Abstract]

Chatbots With Attitude: Enhancing Chatbot Interactions Through Dynamic Personality Infusion

Proceedings of the 6th International Conference on Conversational User Interfaces (CUI) (Luxembourg, Luxembourg, July 08-10, 2024), pp. 1-16

Available files: [PDF][PDF suppl.] [Video] [BibTeX] [Abstract] [YouTube]

The Personality Dimensions GPT-3 Expresses During Human-Chatbot Interactions

Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies, ACM, vol. 8, no. 2, 2024, pp. 1-36

Available files: [PDF][PDF suppl.] [BibTeX] [Abstract]

2023

Personality Trait Recognition Based on Smartphone Typing Characteristics in the Wild

IEEE Transactions on Affective Computing, IEEE, vol. 14, no. 4, 2023, pp. 3207-3217

Available files: [PDF][PDF suppl.] [BibTeX] [Abstract]

2022

Affective State Prediction from Smartphone Touch and Sensor Data in the Wild

SIGCHI Conference on Human Factors in Computing Systems (CHI '22) (New Orleans, Louisiana, USA, April 29 - May 5, 2022), pp. 1-14

Available files: [PDF][ZIP suppl.] [Video] [Video] [Video] [BibTeX] [Abstract]

2020

Image Reconstruction of Tablet Front Camera Recordings in Educational Settings

The 13th International Conference on Educational Data Mining (EDM) (Ifrane, Morocco, July 10-13, 2020), pp. 245-256

Available files: [PDF] [Video] [BibTeX] [Abstract]

Glyph-Based Visualization of Affective States

EuroVis (Norrköping, Sweden, May 25-29, 2020), pp. 121-125

Available files: [PDF][ZIP suppl.] [Video] [BibTeX] [Abstract]

Affective State Prediction Based on Semi-Supervised Learning from Smartphone Touch Data

Proceedings of the 2020 CHI Conference on Human Factors in Computing Systems (CHI '20) (Honolulu, Hawaii, April 25-30, 2020), pp. 377:1-13

Available files: [PDF][ZIP suppl.] [Video] [Video] [Video] [Video] [BibTeX] [Abstract]

2019

Affective State Prediction in a Mobile Setting using Wearable Biometric Sensors and Stylus

The 12th International Conference on Educational Data Mining (EDM) (Montreal, Canada, July 2-5, 2019), pp. 224-233

Available files: [PDF] [BibTeX] [Abstract]